As every field rushes to grapple with AI’s implications, we’ve witnessed flashes of its potential—some deeply disturbing, others promising, even joyful. Technologies like ChatGPT, DALL-E, Midjourney, and Boston Dynamics’ robotics have created a landscape where the future feels simultaneously closer and more alien than ever. The conversation surrounding AI is far from settled. Questions abound: Is it a restrictive apparatus, limiting human offerings or engagement with the world? A prescriptive or deterministic mechanism, ever narrowing or funneling toward one end, closing the world of possibilities around us? A doom bringer or brain destroyer—the ultimate tool for the lazy and uninspired?

I come to this discourse as a designer and teacher trained in architecture, a role that is both subjective and objective, quantifiable and qualitative. I often play mathematician, technician, and creative spirit, one who cares as much for beauty as for logic and reason. It’s from this dissonant viewpoint that I have felt the whiz of AI brush past me, sometimes exhilarating, sometimes disorienting. Yet within this rush, and through my own engagement with these algorithms, I have begun to find clarity about some of these anxieties and hopes within my own work. It’s from this perspective, not as a prophet or a pessimist but as a participant who sees AI as a prosthetic, that I want to offer a reflection on AI’s potential.

Contrary to the narratives that are often put forth by techno-optimists, I believe that AI’s greatest promise doesn’t lie in speeding up labor or mimicking human creativity but in helping us unlearn, forget, and speculate more boldly. Rather than viewing AI merely as a replacement or accelerator, I see it as a tool for recovering sympathy, contradiction, nonconscious thinking, and relational aesthetics—those parts of human experience that industrial and informational cultures have historically suppressed.

The question is not whether AI will “take over,” but whether we will allow it to help us access older, slower, more ambiguous, and more fertile ways of engaging with ourselves, each other, and the world. This project demands care, criticality, and imagination, and it demands that we resist the narrowing tendencies of both market logic and technological determinism that dominate so much of the discourse today.

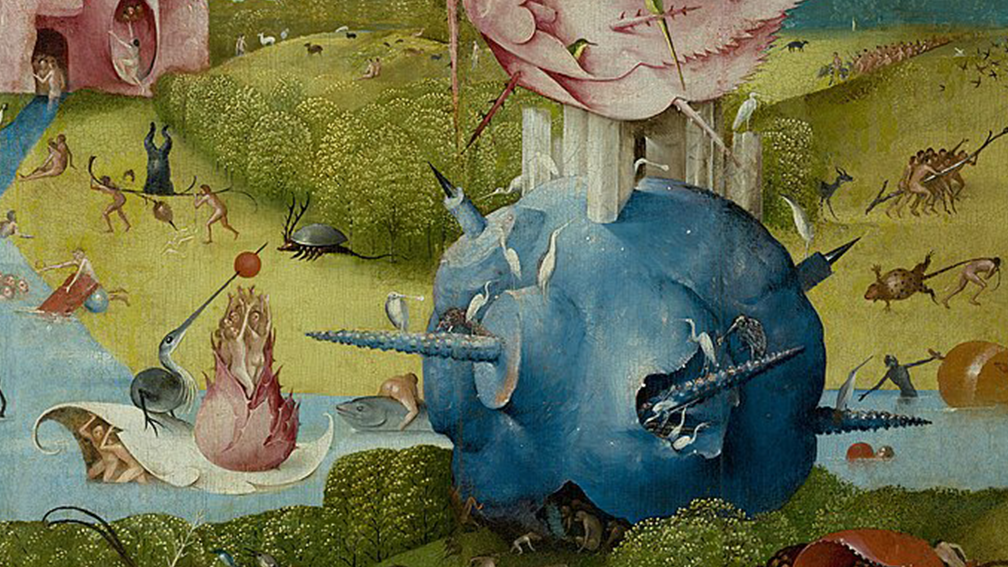

While debates rage over the ultimate value of AI-generated artworks, one recurring theme emerges across many critical conversations: the process of making still matters. I can imagine the backbreaking work of a sculptor, the toiling hours of a painter, the violent gestures of an abstract expressionist at their canvas. I can feel these things, even without ever having touched that marble or brush myself. As a lover of art, I’m moved by Auguste Rodin’s sculptural works, but even more so by his plaster molds, his cast tests riddled with seams and imperfections. They reveal the hidden labor, the mistakes, the struggle underneath the marble’s polished surface. They show not just the finished idea but the process of grappling itself.

This is the sympathetic bond of art: a non-verbal, almost somatic connection that ties us, empathically, to others’ experiences. Nelson Goodman reminds us, in Ways of Worldmaking, that our very perceptions are shaped by history, need, and prejudice: “Not only how but what it sees is regulated by need and prejudice... Nothing is seen nakedly or naked.” We do not passively receive artworks; we seek something in them. Sympathy, struggle, human presence are all actively projected into our seeing.

When AI produces an artwork—particularly when it does so without any labor we can imagine or access—this sympathetic bond risks being severed. The feeling of connection, the intuition that someone else’s experience pulses beneath the surface, can be lost. Imagine the dissonance of believing you are engaging with another human’s struggle or triumph, only to learn it was the output of a convoluted algorithm, hyper-indexical and cold. For many viewing these artworks, this discovery feels like a betrayal.

For artists, the risk is amplified. Industry’s initial instinct has been to treat AI as a tool for technical acceleration. Within the dominant economic system, speed is treated as a virtue, often the primary one, because it maximizes output, visibility, and profit. This ideology holds that the function of the artist, the writer, the craftsperson is simply to move faster: to output more, to meet the pace of machine-assisted production. Yet the fear is not only that AI will render the artist’s labor invisible or economically unsustainable. There is the added concern that when the relationship between time investment and value is severed, the sympathetic bond begins to erode. The act of making is flattened into mere output. While the material threats that AI poses are real, as an architect I’m equally drawn to the question of what AI might mean for the spirit of creation.

I must begin by confessing that instead of feeling betrayed by generated imagery, I’ve often found myself captivated, even when the result feels kitschy or derivative. I’m drawn to the alien expressiveness at work in AI imagery, how it functions as a signal from another mode of thought. The image refracts our own ways of seeing, sometimes distorting them, sometimes clarifying them, but always asking us to look again. In my own work, the power of AI lies not in its ability to replicate known forms but in its capacity to generate confusion: strange new hybrids that evade simple referentiality. There is a pervasive belief that because AI is trained on referential material (human language, images, and data), its outputs must necessarily be referential as well. In truth, artistic processes integrating AI can gestate material that destabilizes reference as easily as they can reinforce it.

To understand this, it is helpful to turn to Daniel Heller-Roazen’s meditations on language itself. In Echolalias, he writes that “nowhere is a language more ‘itself’ than at the moment it seems to leave the terrain of its sound and sense.” Language becomes most alive, most itself, precisely at the moment it teeters on the brink of nonsense. A similar sentiment is echoed in Katherine Hayles’s exploration of cognition beyond conscious thought through Peter Watts’s novel Blindsight. In the story, the protagonist Siri Keeton undergoes a radical hemispherectomy and subsequently loses the natural ability to intuit meaning. To compensate, he retrains himself by studying microexpressions and “information topologies,” learning to infer meaning through patterns rather than instinct. Confronted with the strangeness of this mechanical empathy, Keeton reflects, “people simply can’t accept that patterns carry their own intelligence.” Hayles uses this moment to highlight a crucial idea: intelligence is not confined to conscious deliberation but also emerges from ambient, patterned, and latent interconnections. This domain of nonconscious cognition operates not through explicit, referential understanding, but through associative, relational processes that unfold beneath the surface.

This insight is crucial for rethinking the role of AI in artistic practice. Rather than treating AI as a representational engine—one that simply reflects or amplifies known realities—we might understand it as an agent of nonconscious patterning. In this view, AI becomes a prosthesis for intuition, a mechanism through which submerged cognitive processes—those ambient, patterned, and associative logics—can be exteriorized and interacted with. It stirs up dormant connections, speculative leaps, and configurations that our habitual categories might otherwise foreclose. This doesn't mean AI offers access to some universal unconscious, à la Jung, but that it models an alternate, distributed mode of cognition—one that operates not through self-awareness but through correlation, recurrence, and relational inference. In this light, the most creative outputs of AI are not failures of reference but demonstrations of a deeper linguistic truth: meaning arises most vibrantly when it is unstable, slipping just beyond fixed denotation.

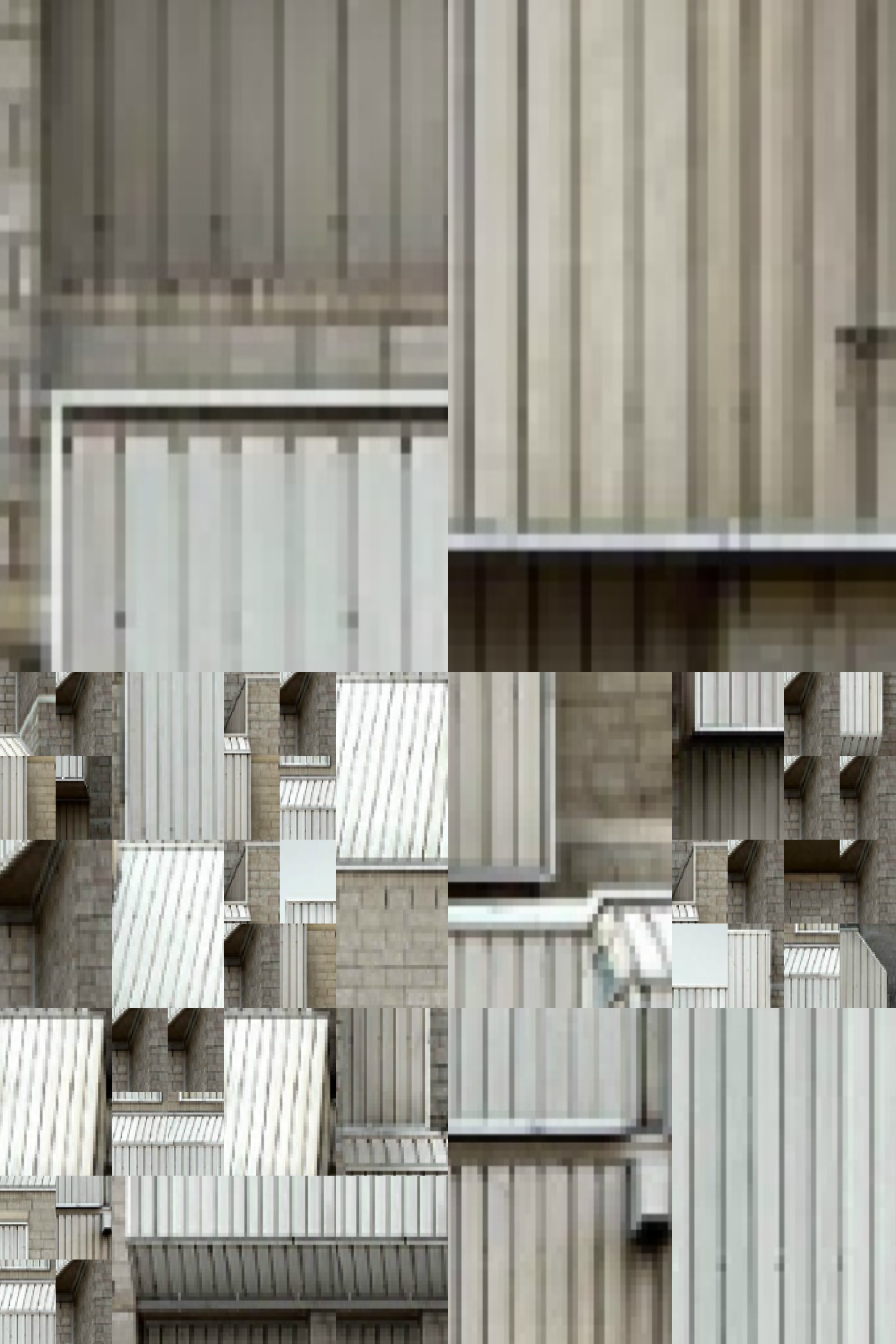

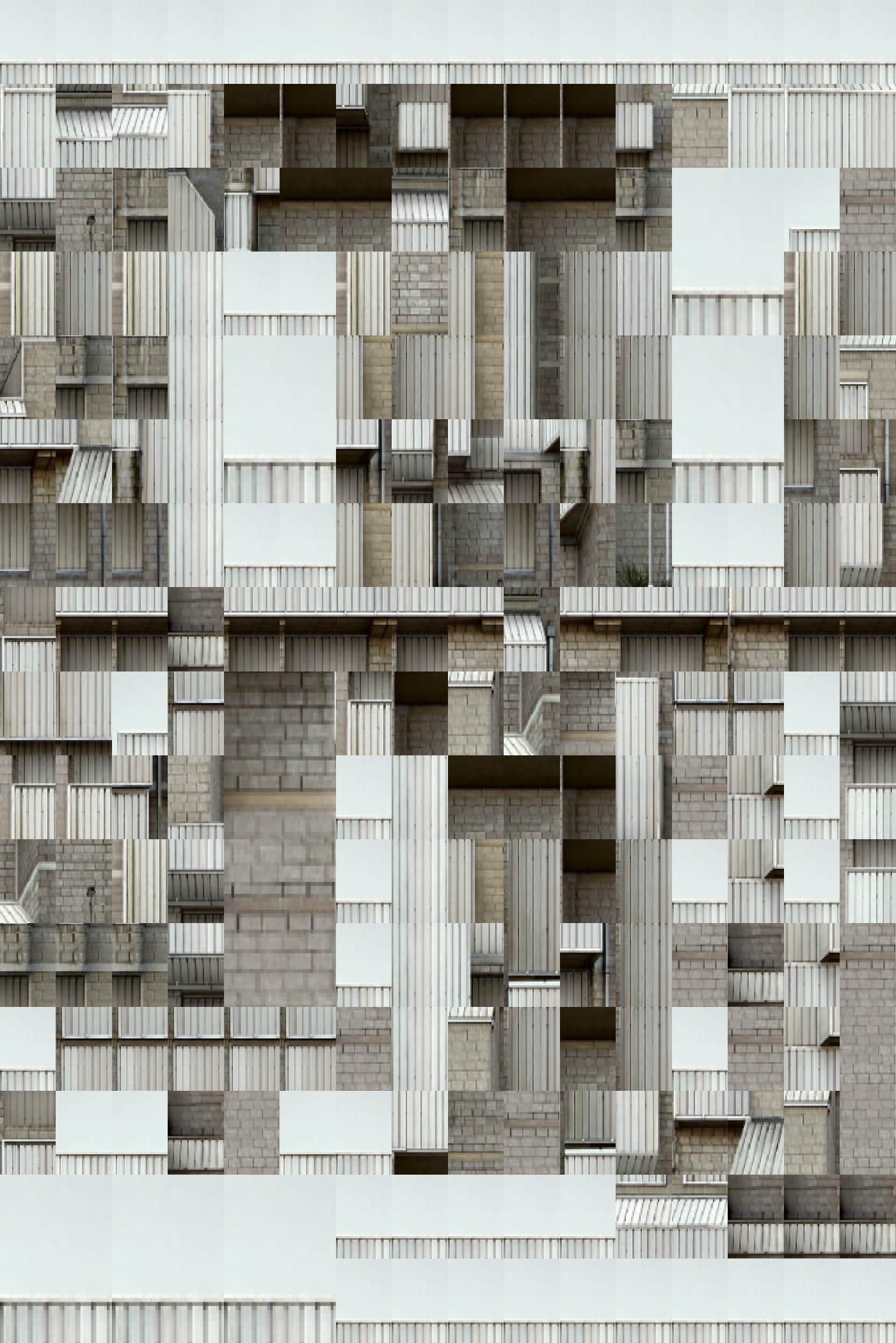

To make these thoughts evident, I have developed two image series exploring the detachment and defamiliarization made possible through AI models. In This, But That and This, Like That, images are constructed through a perception-based tiling system: a quadtree subdivision method that uses the mean squared error and standard deviation of pixel values within each tile to determine whether further subdivision is warranted. This process discretizes image structure and draws on techniques common in image compression, pairing visual detail with computational efficiency. The result is a mosaic-like image built from nested units of varying size and granularity. Each tile becomes a modular fragment whose aesthetic and semantic significance fluctuates depending on its context.
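For readers who want to see the mechanics, here is a minimal sketch of a quadtree subdivision of this kind. It is an illustration under stated assumptions, not the code behind the series: the function names, the threshold values, the minimum tile size, and the rule that a tile splits when either measure exceeds its threshold are all hypothetical choices made for the example.

```python
import numpy as np

def tile_error(tile):
    """Return (mse, std) of a tile's pixel values.

    MSE is measured against the tile's own mean colour (per channel);
    std is taken over all pixel values in the tile.
    """
    mean = tile.mean(axis=(0, 1), keepdims=True)
    mse = float(((tile - mean) ** 2).mean())
    std = float(tile.std())
    return mse, std

def quadtree(img, x=0, y=0, w=None, h=None,
             mse_thresh=100.0, std_thresh=12.0, min_size=4):
    """Recursively split img into tiles; return a list of (x, y, w, h).

    Assumed rule: keep a tile whole when both measures fall at or below
    their thresholds, or when the tile has reached min_size (>= 1).
    """
    if w is None:
        h, w = img.shape[:2]
    tile = img[y:y + h, x:x + w].astype(np.float64)
    mse, std = tile_error(tile)
    too_small = w <= min_size or h <= min_size
    if too_small or (mse <= mse_thresh and std <= std_thresh):
        return [(x, y, w, h)]
    hw, hh = w // 2, h // 2  # split point; odd sizes give uneven children
    children = ((0, 0, hw, hh), (hw, 0, w - hw, hh),
                (0, hh, hw, h - hh), (hw, hh, w - hw, h - hh))
    tiles = []
    for dx, dy, cw, ch in children:
        tiles += quadtree(img, x + dx, y + dy, cw, ch,
                          mse_thresh, std_thresh, min_size)
    return tiles

def render(img, tiles):
    """Paint every tile with its mean colour to produce the mosaic."""
    out = np.empty_like(img)
    for x, y, w, h in tiles:
        out[y:y + h, x:x + w] = img[y:y + h, x:x + w].mean(axis=(0, 1))
    return out

# Toy input: left half black, right half white.
demo = np.zeros((64, 64))
demo[:, 32:] = 255.0
demo_tiles = quadtree(demo)
print(len(demo_tiles))  # 4: the root splits once, each quadrant is uniform
```

Lowering the thresholds produces more and smaller tiles in detailed regions, while flat regions stay as large blocks. That uneven granularity is what gives the mosaic its nested character.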

These compressed mosaics are not just formal exercises. They resemble Thomas Ruff’s over-compressed photographs in that they stage a degradation of reference: images made strange through data loss and algorithmic intervention. What remains is not a clear depiction of an original scene but a vague residue of form and color—an impressionistic shell shaped by technical thresholds. The image becomes not a representation, but a field of transformation.

The quadtree, then, functions as a patchwork generator: a system for organizing fragments into a relational ecology. Each tile holds different visual and semantic weight, contributing to an emergent syntactic network. Discretization becomes the first gesture of defamiliarization—it fractures legibility, defers recognition, and opens space for ambiguity. The familiar becomes strange, not through abstraction alone but through recomposition. Meaning is not given; it is distributed, unstable, and contingent.

This fragmentation allows for what I call “ecologies of parts” or “metaphorical assemblies.” In This, But That, selective deletion removes portions of the image, asking the remaining tiles to carry the perceptual and aesthetic burden of the whole. This disrupts the holistic reading of the image and foregrounds the relational mesh that binds its parts. In This, Like That, fragments from one image are used to construct another, reterritorializing material from one semantic context into an entirely different visual grammar. Tiles that once carried concrete referents are cast into new arrangements, stripped of their original context and forced to signify otherwise—or not at all. The result is a kind of “visual daisy-chaining,” where the semantics of the original image dissolve into a new syntactic structure defined by pattern, tension, and ambiguity.

This practice finds a kind of kinship in the behavior of certain AI systems—variational autoencoders, single-shot learners, object detection models—which can function not only as tools of recognition but as engines of defamiliarization. These models allow artists to access a space of semantic forgetting, a perceptual zone in which the referent slips away and is replaced by strange, emergent orderings. This is not an error but a productive rupture: an aesthetic logic built from parts, fragments, and disassemblies. Meaning here is constructed relationally, not representationally.

In this sense, AI is not simply a machine for generating images but a prosthetic for rethinking composition itself. It invites a shift from representational fidelity to speculative assembly, from semantic clarity to syntactic play. Like the assemblages of Manuel DeLanda, these works operate through territorialization and emergence—where parts do not illustrate wholes but participate in their construction. Figures arise not through resemblance but through relational binding. They are legible not because they mirror the real, but because they activate our capacity for perceptual and conceptual inference.

AI, therefore, can be more than a mirror reflecting back the world as we know it. It can be a prism, refracting the known into the unknown, pushing us into territories where referents collapse and fiction takes root. It allows us to encounter not just what is, but what might be or never was. And crucially, this process of fictionalization—of moving beyond the familiar—is not an accident or error. It is where the true promise of AI in artistic processes lies: not in the efficiency of reproducing what already exists, but in the speculative rupture that makes room for the not-yet-imagined.

In the tale of Abu Nuwas, after memorizing a thousand lines of ancient verse, the famed poet is told by his master to forget them entirely before composing his own poetry. Only after some time, when he proclaims that he has forgotten them completely, does the master reply, “Now go compose!” Only through this act of obliteration, of severing reference and unbinding himself from the strictures of memorized knowledge, can he truly create.

Like Abu Nuwas, artists are steeped in immense corpuses of reference material: images, texts, sounds. But true invention requires not mechanical recombination of those materials, but a selective forgetting—a capacity to move beyond inherited forms and generate new structures out of relational ambiguity. In this sense, AI can serve as a tool of creative forgetting—but it rarely does so by default. AI more often reproduces dominant aesthetic, linguistic, and cultural patterns, reinforcing the very referential systems it has been trained upon. AI is not a neutral field; because it is trained on human materials using human-made algorithms, it inherits and reproduces the dominant structures of its source data. What might be mistaken for the emergence of a “collective unconscious” is often just the echo of what has been most frequently encoded: white, Western, heteronormative, patriarchal norms disguised as statistical averages.

The task, then, is to approach AI not as a passive engine of production but as a speculative instrument, one that, when critically and creatively engaged, allows us to press against the grain of recognition. Donna Haraway’s call to “stay with the trouble” becomes relevant here. It’s essential that we don’t embrace the outputs of AI uncritically, but rather work within and against them: to trouble their inheritance, to use them for speculative estrangement rather than passive reflection. Speculative, non-representational uses of AI, those that acknowledge their fictional, metaphorical nature, honor its promise of ambiguity and nonconscious interconnection. When used with intention, AI can help externalize and accelerate our efforts to move beyond referential constraints, inviting us into the latent spaces between fixed categories. It opens the possibility, though not the guarantee, of rediscovering what Heller-Roazen calls the “true homeland” of speech: exile, displacement, the generative instability where creativity thrives.

Though plenty of ink has been spilled over all we stand to forget through our increased reliance on AI, writers like Heller-Roazen remind us that forgetting has a positive dimension as well. Precisely because pattern-based generation unsettles our reliance on conscious reference, it can also open ethical and imaginative pathways. In Vibrant Matter, Jane Bennett argues for a heightened sensitivity to the vibrancy and agency of objects and processes, urging us to appreciate the subtle, emergent qualities that escape categorical capture. Applied to AI, Bennett’s ethic of attention demands that we value the strange, the contradictory, the flickering moments where AI-generated work refuses stable meaning and invites wonder instead. To pay attention to these qualitative moments in the output of AI processes is to resist the drive toward instrumentalization and misrepresentation. It is to honor AI’s capacity for sympathetic discovery, not by pretending it thinks or feels as we do, but by recognizing the new terrains of association, forgetting, and reimagining it can catalyze.

Ultimately, the most profound role of AI may not be as a producer of finished artifacts or efficient outputs, but as a companion in the ongoing human project of discovering the hidden sympathies of the world. It invites us into new relational fields, where memory and forgetting, reason and nonconscious patterning, reality and imagination intersect in ever-shifting ways. There’s little doubt that AI will lead us to forget. But fear of technologically induced forgetting goes back as far as Plato, who distrusted writing for the same reason. What matters is that we choose to forget in ways that do not diminish ourselves, but rather extend the reach of our sympathies: toward one another, toward the unknown, and toward the fragile, fertile spaces between. ◼