There is a tendency to remember Y2K as the apocalypse that wasn’t. Media reports at the time ranged from cautiously skeptical to outright hyperbolic (Newsweek ran a cover story called “The Day the World Crashes”). Religious leaders declared the event biblically preordained, comparing “advanced computer technology” to the Mark of the Beast. There were Y2K-branded survival guides, from both anti-government conspiracy theorists and left-leaning institutions like the Utne Reader. A made-for-TV disaster film, aptly called Y2K: The Movie, featured Ken Olin as a “Y2K troubleshooter” battling a panoply of catastrophes, including a nuclear meltdown near Seattle. When Olin’s daughter cries, “I’m so sick of Y2K!” she could have been speaking for many.

Whether you remember the pre-millennial era with residual anxiety or — perhaps more likely — with laughter, you are probably recalling all the doom-mongering, followed by the anticlimax. Or perhaps you are one of the many people for whom “Y2K” has come to refer to a moment in culture and fashion, rather than a computer crisis. On the fateful night itself, as the counting reached zero-zero, people downed drinks and embraced their loved ones under explosions in the sky — maybe, for some of them, out of relief, but mostly because that’s what you do on New Year’s Eve. It was pretty obvious pretty quickly that the world had not ended.

Two hours into the year 2000, John Koskinen, the head of President Clinton’s Year 2000 Conversion Council, stood before the press, explaining why the world hadn’t ended. Over the following hours and days, Koskinen would hit the same basic details in press briefing after press briefing, walking the line between calm reassurance and insistence that yes, there really had been a problem in the first place — a problem that Koskinen, along with countless others, had spent years working tirelessly to fix. He was “pleasantly surprised” with how well the turnover had gone (although quite a few problems had occurred, then been caught and quickly fixed). Testifying at Congress’s final Y2K-related hearing at the end of January, Koskinen responded to general accusations that, in his summary, “Y2K was an insignificant problem, hyped by the media, computer consultants and those with other reasons for hoping the world as we know it was about to end.” The lack of calamity, he stressed, was not due to chance, but to years of serious and sustained work to avert it. An assessment which, to be clear, was shared by the bipartisan members of Congress at that hearing.

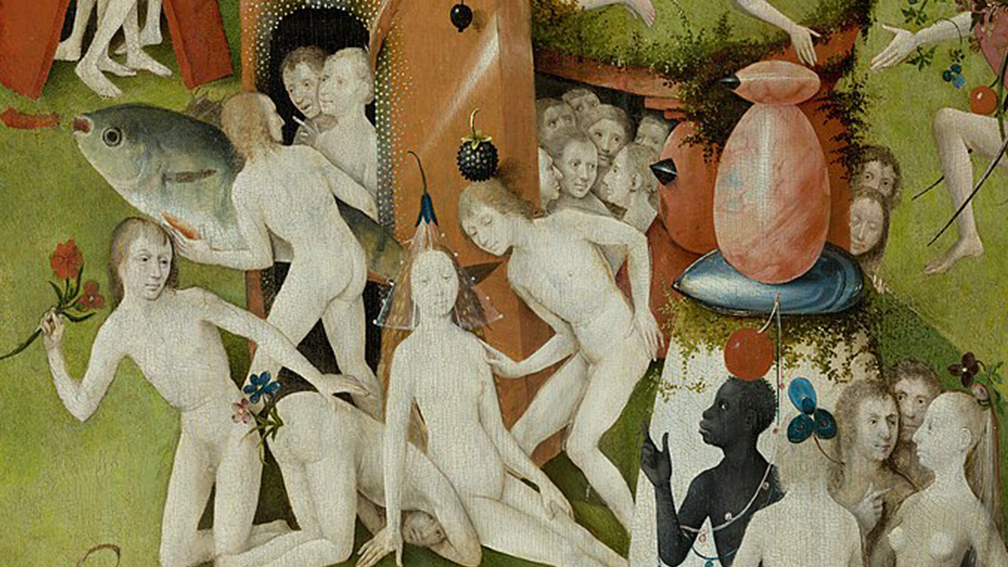

The truth was, before the year 2000, nobody knew exactly what would happen, or how bad it would be. Most Y2K experts in the lead-up to the changeover were predicting something akin to a decidedly non-apocalyptic “bump in the road.” But all they could say was that some disruption, of unknown degree, was possible; and that’s better than guaranteeing catastrophe, but not necessarily more reassuring. Predictions of impending doom tend to feature an alluring mixture of easy-to-imagine imagery, coupled with an appealing sense of certainty. All-out cataclysm is easier to imagine than a slew of technical issues; the dashing hero averting doom at the last second is more fun to picture than an army of IT professionals spending years dutifully tapping away in front of their computers.

But there was another major crisis at the heart of Y2K, one less remarked on, and less bombastic than apocalypse at the ball drop, but no less serious; and, more perturbingly, never truly remedied. It wasn’t the possibility that the world would end when 1999 became 2000. It was the fact that the world people thought they knew had ended already.

In computing’s early days — back when a computer was still a room-sized, very expensive mainframe machine that relatively few people had access to — computer memory was precious. Given the expense of the machines themselves, management was eager to save money wherever they could. Thus, various computer professionals hit upon the idea of saving memory (in other words: saving money) by truncating dates: lopping off the first two century digits, so that 1960 would be recorded simply as 60. This solution was widely adopted because it worked: the date-related calculations that computers often performed didn’t need those two century digits; it was in keeping with the way people generally talk about decades; and it did save money. After all, 1999 minus 1939 equals 60, and 99 minus 39 also equals 60.

Of course, one didn’t need to be blessed (or cursed) with the power of foresight to anticipate problems when those century digits ceased to be 1 and 9. After all, 2000 minus 1939 equals 61, but 00 minus 39 does not. The anxiety-inducing question at the core of Y2K was: What exactly would happen when computers tried to perform routine calculations and started generating numbers like negative 39? And the even more anxiety-inducing answer was: it’s not entirely clear.
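The arithmetic is easy to reproduce. What follows is a minimal sketch in Python, offered only as an illustration and not as a reconstruction of any real legacy program; the function names are hypothetical, and the years are the ones used in the example above.

```python
def truncate(year: int) -> int:
    """Drop the century digits, keeping only the last two (1960 -> 60)."""
    return year % 100


def elapsed_two_digit(current_yy: int, earlier_yy: int) -> int:
    """Subtract two-digit years, as a memory-saving program might (hypothetical)."""
    return current_yy - earlier_yy


def elapsed_four_digit(current_year: int, earlier_year: int) -> int:
    """Subtract full four-digit years, for comparison."""
    return current_year - earlier_year


# While both dates fall in the 1900s, the shortcut and the full form agree.
print(elapsed_two_digit(truncate(1999), truncate(1939)))  # 60
print(elapsed_four_digit(1999, 1939))                     # 60

# Once the century rolls over, they part ways.
print(elapsed_two_digit(truncate(2000), truncate(1939)))  # -39
print(elapsed_four_digit(2000, 1939))                     # 61
```

The sketch shows only the wrong number itself. What a given program then did with that number, whether it crashed, carried on, or quietly stored it, is the part nobody could know without testing.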

The computer scientist Bob Bemer first tried to draw attention to the problem in the Honeywell Computer Journal back in 1971. Nevertheless, the IT community, as a whole, remained generally assured that someone else would fix the issue before it truly became a problem. The 1970s and 1980s saw some significant advances in computer hardware, but much of the underlying code remained the same. It was not until the 1990s that specialists mounted an organized effort to address the situation, by which point they had their work cut out for them.

What most of the technical experts agreed on was that a variety of things could happen when computers began to encounter those strange dates. There was the dreaded scenario, in which the problematic dates led to programs terminating or failing, and actual computer systems shutting down. There was the annoying scenario, in which the computer systems kept working more-or-less as normal, but started to fill up with garbage data because of those incorrect date-related calculations, which, in time, could trigger the sorts of failures outlined in the previous scenario. Lastly, there was the ideal scenario, in which nothing of note happened at all.

The problem was that it wasn’t clear which of these three scenarios awaited any particular computer system. Alas, with computer systems depended on for everything from national defense to banking to literally keeping the lights on, no company (and no nation) could afford to simply sit back and hope for the best. Unfortunately, the only way to know which of those three scenarios was most likely was to go in and test the code, and there was a lot of code to go in and test. The software engineer Capers Jones estimated that, in the US alone, there were over 1.7 billion function points that needed to be checked and potentially repaired, spread across a range of systems and written in a host of different computer languages.

Computing had infiltrated the world to a degree that early computer engineers would have blushed to imagine, and this left later engineers scrambling to fully assess the extent. The world’s computerized infrastructure had sprawled and tentacled so vastly that it took a herculean effort to even begin to map it. As IT experts got to work on the problem, they found themselves tangling with a host of broader issues around insufficient documentation, deferred maintenance, and a lack of accurate assessments regarding what sorts of computer systems (and what programming languages) various enterprises depended on. The author and programmer Ellen Ullman summarized the problem in an essay called “The Myth of Order.” (It appeared in an issue of Wired with an all-black cover, upon which, in shiny black text, the words “Lights Out: Learning to Love Y2K” appeared.) The crisis, Ullman wrote, revealed something that “technical people” had known for years: “that a computer system is not a shining city on a hill — perfect and new — but something more akin to an old farmhouse built bit by bit over decades by nonunion carpenters.”

Personal computer usage had surged in the 1990s, especially in the US, driven by cheaper machines and interest in the nascent web. But regardless of whether or not a person used a computer at home or at work, their life was bound up with all manner of unseen computer systems. In 1998, a Senate Special Committee was assembled to consider Y2K’s ultimate impact on utilities, healthcare, telecommunications, transportation, financial services, general government, general business, and litigation — all of which might be affected by the Y2K crisis. “Businesses in today’s world rely on computer systems for virtually every aspect of their operation,” said Senator Robert Bennett, the committee’s chair, “from running elevators to calculating interest on loans, to launching satellites.” In other words, a person did not need a computer in their personal life for their personal life to be heavily dependent on computers.

The “Year 2000 Technology Problem” raised some truly disturbing possibilities, the question of whether the world would blow up on New Year’s Day being among the most far-fetched. Frankly, most serious Y2K experts saw the doomsday yammering as a counterproductive distraction from the actual work that needed to be done. The more reasonable, though just as unsettling, questions were: What if the life we lead, and the world we see around us, is largely dictated by powerful computer systems that we don’t even realize are there? What if these systems are so wide-reaching, so fundamental, but so ultimately piecemeal that even the experts don’t fully understand them? What if the computers have already taken over?

There were lessons to be learned from Y2K, many of which were both important and rather bland: computer maintenance matters; having a sufficiently large IT department is important; seemingly unimportant tasks like cleaning up database entries are necessary; hardware and software updates cannot be put off indefinitely; old code persists; and companies and institutions need to be aware of the underlying computer systems that support what they do. The higher-order, and more unsettling, lesson was that even with tremendous coordination, we can’t actually extricate ourselves from these computer systems.

1999 was the year of peak public anxiety about Y2K. (Ironically, by that time, most of those working seriously on the problem had concluded it was being sufficiently handled.) 1999 was also the year the first Matrix movie arrived in theaters, featuring a group of super-sleek pseudo-hackers battling a totalizing, and totalitarian, computer system. In contrast to the Terminator franchise, computer dominance in The Matrix did not look like a field of human skulls being crushed beneath the treads of a robotic tank. It was a world seemingly like ours, in which people went about their lives completely unaware that they were, in fact, floating in vats, acting as batteries for the machines.

Instead of a standard apocalypse narrative, The Matrix built on a more troubling premise: that the real world is something entirely different than we think it is. Y2K, in effect, proved that to be the case. True, people were not suspended in strange pods with computer cords rammed into the backs of their heads. But Y2K made it quite clear that people were inextricably connected to computer systems, whether they realized it or not.

In The Matrix, it requires a mythical “red pill” to see the world for what it really is. In the years since the movie’s release, that term has taken on some unfortunate right-wing connotations; but if we stay close to its original usage, it seems fair to say that Y2K should have been a “red-pill” moment. It could have forced societies and individuals to reckon with what the world had become — one so completely dependent on computer systems that it risked total collapse if these systems stopped working. Many people, content with Y2K’s successful management, opted for the blue pill instead.

“We discovered we were more dependent on technology than we had thought,” said Cathy Hotka of the National Retail Federation, testifying, with Koskinen, before Congress in January 2000. At that same hearing, Gary Beach, publisher of CIO Magazine, emphasized that thanks to Y2K, “There is now widespread awareness of how pervasive technology is in everyone’s lives, not just those of the digital elite.” The tragedy is that nothing much became of these lessons. The year 2000 arrived, followed by the year 2001, and so on, but the reckoning never really did. Planes did not fall from the sky, nuclear power plants did not melt down, the world did not end. But the world as many thought they knew it, a world not hopelessly dependent on computer systems, already had. ◼